If you’re leading a pharmaceutical or biotech company in Raleigh, ask yourself one honest question:

Is your data ready for AI, or are you just storing it?

There’s a critical difference.

AI in pharma brings real opportunity: drug discovery modeling, predictive clinical trials, intelligent manufacturing oversight.

But none of that delivers results without engineered data pipelines behind it.

And in pharmaceutical environments, weak data infrastructure does more than slow progress. It creates compliance risk.

By 2026, the companies leading in Raleigh’s life sciences sector will not simply adopt AI.

They will build the foundation correctly.

Why Raleigh’s Life Sciences Environment Requires Discipline

In Raleigh’s life sciences ecosystem, data flows from trials, labs, and manufacturing systems at the same time. Without engineered pipelines, that flow becomes fragmented.

Read that again.

When clinical trial data, genomics research, compliance records, and production logs move across disconnected systems, the impact shows up fast.

Teams begin to see:

- Duplicate records

- Version inconsistencies

- Incomplete audit trails

- Delayed reporting cycles

And once those issues appear, they don’t stay isolated. They ripple across analytics, compliance reviews, and AI initiatives.
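To make the first two symptoms concrete, here is a minimal sketch of how a team might flag duplicate records and version inconsistencies across two systems. The record layout (`source`, `subject_id`, `version`, `payload`) and the system names are assumptions made up for illustration, not a standard schema.

```python
from collections import defaultdict


def find_record_issues(records):
    """Group records by subject_id and report duplicates and version conflicts.

    Each record is a dict with hypothetical keys: 'source', 'subject_id',
    'version', and 'payload'.
    """
    by_subject = defaultdict(list)
    for rec in records:
        by_subject[rec["subject_id"]].append(rec)

    duplicates, conflicts = [], []
    for subject_id, group in by_subject.items():
        if len(group) < 2:
            continue  # only one copy exists, nothing to compare
        payloads = {repr(sorted(r["payload"].items())) for r in group}
        versions = {r["version"] for r in group}
        if len(payloads) == 1 and len(versions) == 1:
            duplicates.append(subject_id)  # same content stored more than once
        else:
            conflicts.append(subject_id)   # copies disagree on version or content
    return sorted(duplicates), sorted(conflicts)


# Hypothetical sample data from two disconnected systems.
records = [
    {"source": "trial_db", "subject_id": "S-001", "version": 2, "payload": {"dose_mg": 50}},
    {"source": "lab_lims", "subject_id": "S-001", "version": 2, "payload": {"dose_mg": 50}},
    {"source": "trial_db", "subject_id": "S-002", "version": 3, "payload": {"dose_mg": 75}},
    {"source": "lab_lims", "subject_id": "S-002", "version": 1, "payload": {"dose_mg": 70}},
    {"source": "trial_db", "subject_id": "S-003", "version": 1, "payload": {"dose_mg": 25}},
]
duplicates, conflicts = find_record_issues(records)
print("duplicates:", duplicates)
print("conflicts:", conflicts)
```

A check like this only surfaces the problem. Deciding which copy is authoritative is a governance decision that the pipeline design has to encode.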

This is not an AI problem.

It is a data engineering problem.

What Pharma Data Engineering Actually Involves

Pharma data engineering goes far beyond database management.

It involves structured systems that:

- Collect regulated data from multiple platforms

- Clean and standardize records

- Secure data with controlled access

- Track lineage from origin to output

- Prepare datasets for AI modeling

Every transformation must be traceable.

Every workflow must be documented.

Every output must be defensible.

Because in pharma, if you cannot explain the data, you cannot defend the decision.
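One way to picture traceable transformations is a lineage log that fingerprints the data going into and coming out of every step. The sketch below is an illustration under stated assumptions: `LineageLog`, `fingerprint`, and the two sample transformations are hypothetical names, and a production system would also capture who ran the step, when, and with which code version.

```python
import hashlib
import json
from dataclasses import dataclass, field


def fingerprint(data):
    """Return a stable SHA-256 hex digest of JSON-serializable data."""
    canonical = json.dumps(data, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass
class LineageLog:
    """Hypothetical in-memory lineage log: one entry per transformation."""
    steps: list = field(default_factory=list)

    def apply(self, name, func, data):
        """Run func on data and record what went in and what came out."""
        result = func(data)
        self.steps.append({
            "step": name,
            "input_hash": fingerprint(data),
            "output_hash": fingerprint(result),
        })
        return result

    def is_contiguous(self):
        """Each step's input must match the previous step's output."""
        return all(
            prev["output_hash"] == cur["input_hash"]
            for prev, cur in zip(self.steps, self.steps[1:])
        )


def drop_incomplete(rows):
    # Remove rows that have no measured result.
    return [r for r in rows if r.get("result") is not None]


def to_mg(rows):
    # Convert results from grams to milligrams (sample data is all in grams).
    return [{**r, "result": r["result"] * 1000, "unit": "mg"} for r in rows]


raw = [
    {"sample": "A1", "result": 0.5, "unit": "g"},
    {"sample": "A2", "result": None, "unit": "g"},
]
log = LineageLog()
cleaned = log.apply("drop_incomplete", drop_incomplete, raw)
converted = log.apply("to_mg", to_mg, cleaned)
print(converted)
print([s["step"] for s in log.steps], log.is_contiguous())
```

Because every step records both hashes, a reviewer can confirm that no undocumented change happened between steps. That is the practical meaning of lineage from origin to output.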

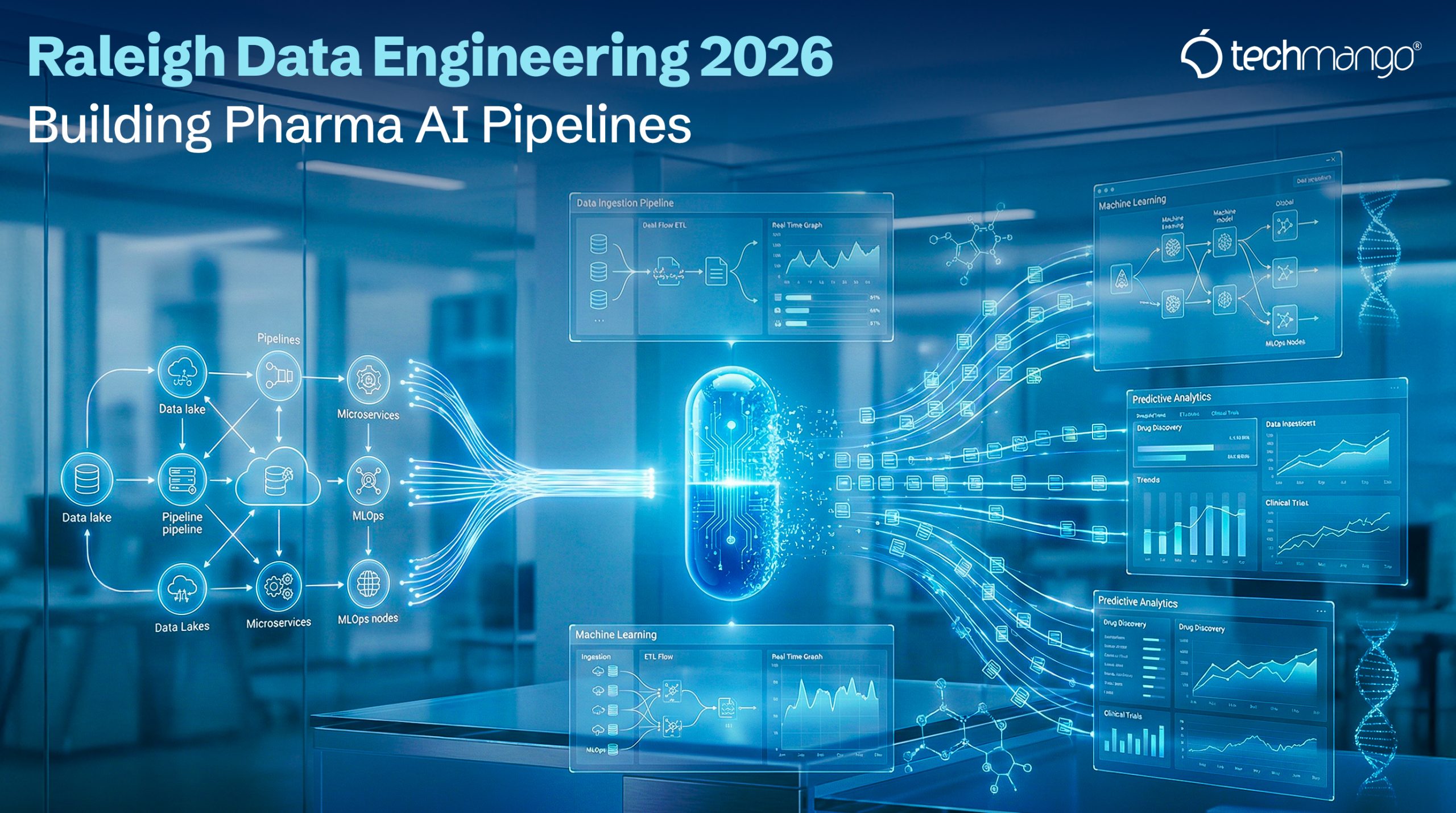

What a Modern Pharma AI Pipeline Looks Like

A properly designed AI pipeline in a pharmaceutical environment includes:

Data Ingestion

Pulling information from EHR systems, lab instruments, ERP platforms, and trial software.

Data Processing

Validating formats, normalizing fields, and preparing structured datasets.

Secure Storage

Encrypted cloud infrastructure with role-based access control.

AI Preparation

Structuring data for predictive models, generative systems, or advanced analytics.

Governance & Monitoring

Tracking data lineage, monitoring model performance, and ensuring regulatory checkpoints are met.

Each stage must be engineered intentionally.

Improvisation does not survive audits.
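As an illustration of the Data Processing stage, the sketch below validates one incoming record and normalizes it into a consistent shape. The field names, the `MM/DD/YYYY` source date format, and the unit table are assumptions chosen for the example. A real pipeline would take them from the documented specification of each source system.

```python
from datetime import datetime

# Hypothetical required fields and unit conversions for a lab result feed.
REQUIRED_FIELDS = ("sample_id", "collected_on", "value", "unit")
UNIT_FACTORS_TO_MG = {"mg": 1.0, "g": 1000.0, "ug": 0.001}


def normalize_record(raw):
    """Return (record, errors). record is None when validation fails."""
    errors = [f"missing field: {name}" for name in REQUIRED_FIELDS if name not in raw]
    if errors:
        return None, errors

    unit = str(raw["unit"]).strip().lower()
    if unit not in UNIT_FACTORS_TO_MG:
        errors.append(f"unknown unit: {raw['unit']}")

    try:
        value = float(raw["value"])
    except (TypeError, ValueError):
        errors.append(f"non-numeric value: {raw['value']!r}")
        value = None

    try:
        # Assumes the source system exports dates as MM/DD/YYYY.
        collected = datetime.strptime(str(raw["collected_on"]).strip(), "%m/%d/%Y").date()
    except ValueError:
        errors.append(f"bad date: {raw['collected_on']!r}")
        collected = None

    if errors:
        return None, errors  # reject the record and keep every reason
    return {
        "sample_id": str(raw["sample_id"]).strip().upper(),
        "collected_on": collected.isoformat(),
        "value_mg": value * UNIT_FACTORS_TO_MG[unit],
    }, []


good, good_errors = normalize_record(
    {"sample_id": " a-17 ", "collected_on": "03/09/2025", "value": "0.25", "unit": "G"}
)
bad, bad_errors = normalize_record(
    {"sample_id": "a-18", "collected_on": "2025-03-09", "value": "n/a", "unit": "ml"}
)
print(good)
print(bad_errors)
```

Rejected records come back with every reason attached rather than being silently dropped, so the rejection itself can be documented and reviewed.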

Why Many Pharma AI Projects Struggle

Industry surveys frequently point to weak data foundations as a leading reason AI initiatives stall or fail.

In pharmaceutical environments, that often looks like:

- Manual reconciliation in spreadsheets

- Disconnected warehouse environments

- Limited visibility into data transformations

- Poor documentation practices

When pipelines lack structure, AI outputs become difficult to verify.

If results cannot be verified, they cannot be trusted.

Compliance Is Part of the Architecture

Pharma AI pipelines must support:

- HIPAA data protection standards

- FDA 21 CFR Part 11 requirements

- Controlled access permissions

- Complete audit trails

- Reproducible transformations

The hard truth: compliance cannot be added later.

It must be built into the pipeline from the first architectural decision.

Waiting until deployment to address governance creates risk that compounds over time.
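To show what "built in" can look like, here is a minimal sketch that combines a role-based permission check with an append-only, hash-chained audit trail, so that any later edit to a logged event becomes detectable. The roles, permissions, and class name are hypothetical, and this is not a claim about what any regulation requires in detail. Among other things, a real audit trail would carry secure timestamps and live in protected storage.

```python
import hashlib
import json

# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS = {
    "analyst": {"read"},
    "data_engineer": {"read", "write"},
}


class AuditTrail:
    """Append-only log where each entry's hash covers the previous hash."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def _digest(self, prev_hash, event):
        body = json.dumps(event, sort_keys=True) + prev_hash
        return hashlib.sha256(body.encode("utf-8")).hexdigest()

    def record(self, user, role, action, target):
        """Check the permission, log the attempt either way, return the decision."""
        allowed = action in ROLE_PERMISSIONS.get(role, set())
        event = {"user": user, "role": role, "action": action,
                 "target": target, "allowed": allowed}
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        self.entries.append({"event": event, "hash": self._digest(prev_hash, event)})
        return allowed

    def verify(self):
        """Recompute the chain; any edited event breaks it."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["hash"] != self._digest(prev_hash, entry["event"]):
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
write_ok = trail.record("jdoe", "data_engineer", "write", "batch_records")
write_denied = trail.record("asmith", "analyst", "write", "batch_records")
intact_before = trail.verify()

# Simulate someone quietly rewriting history after the fact.
trail.entries[1]["event"]["allowed"] = True
intact_after = trail.verify()
print(write_ok, write_denied, intact_before, intact_after)
```

Denied attempts are logged alongside successful ones, and the chain check fails as soon as a past entry is altered. Both properties are far easier to design in at the start than to retrofit.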

What 2026 Means for Pharma Companies in Raleigh

By 2026, pharmaceutical organizations will depend heavily on:

- AI-assisted drug discovery

- Predictive clinical modeling

- Real-time manufacturing analytics

- Automated regulatory reporting

Companies that treat data engineering as background IT support will struggle to sustain these initiatives.

Those that treat it as core infrastructure will operate with confidence and clarity.

That difference begins at the architecture level.

Why Structured Engineering Models Make Sense

Building enterprise-grade pharma pipelines entirely in-house requires significant investment. Senior data architects and compliance-aware engineers command high salaries, and regulated environments demand multidisciplinary expertise across data, security, and governance.

That’s why many organizations turn to structured hybrid engineering models.

These models bring together:

- U.S.-aligned architectural leadership

- Dedicated engineering teams

- Clearly defined compliance workflows

- Continuous monitoring and documentation

This approach isn’t about cost alone.

It’s about accountability.

It’s about structure.

It’s about disciplined execution in environments where precision matters.

How Techmango Supports Raleigh Pharma Organizations

Pharmaceutical companies in Raleigh don’t need generic data support. They need disciplined, compliance-aware engineering built for regulated environments.

That’s where Techmango comes in.

We partner with U.S. life sciences organizations to design and implement secure, AI-ready data infrastructure aligned with pharmaceutical standards.

Our engagement begins with structured architecture workshops. Before any code is written, we define:

- Data sources and system dependencies

- Security boundaries and access controls

- Regulatory obligations

- Integration touchpoints

- Performance benchmarks

Once the foundation is clear, our engineering teams build:

- Secure data ingestion pipelines

- Cloud-native storage environments

- Governance and audit tracking frameworks

- AI integration layers aligned with compliance standards

Every deployment is documented.

Every transformation is traceable.

Every system is designed with regulatory alignment in mind.

We approach pharma data engineering as long-term infrastructure, not a temporary implementation.

For organizations in Raleigh preparing for 2026, that means data systems built with structure, visibility, and controlled AI adoption from day one.

The Question That Matters

AI can generate insights.

But engineered systems built on structured data architecture deliver dependable outcomes.

The pharmaceutical organizations that lead over the next several years will not simply experiment with models. They will invest early in disciplined data engineering and compliant infrastructure designed to support intelligent systems responsibly.

This is the difference you need to keep in mind.

When leadership teams treat data engineering as core infrastructure, not background IT, AI initiatives move forward with clarity, audit readiness, and operational control.

That’s where Techmango comes in.

Techmango works with U.S. life sciences organizations to design secure, compliant data systems that support long-term AI adoption in regulated environments like Raleigh.

If you are evaluating modernization efforts, planning new AI initiatives, or reviewing compliance exposure, this is the right time to assess your foundation.

Strong AI outcomes begin with structured engineering decisions.

Talk to our experts.

Let’s design it correctly from the start.